Appendix

Texts

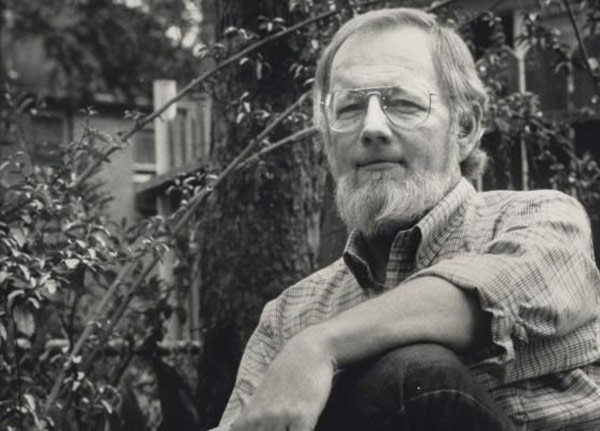

Donald Barthelme

“I have to admit we are mired in the most exquisite mysterious muck.

This muck heaves and palpitates. It is multi-directional and has a mayor.”

“You may not be interested in absurdity, but absurdity is interested in you.”

Some of Us Had Been Threatening Our Friend Colby

A brief work about etiquette and how to act in society.

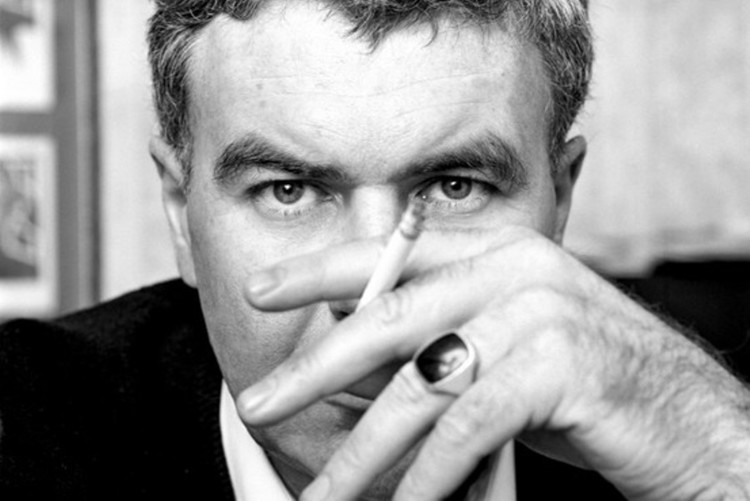

Raymond Carver

“It ought to make us feel ashamed when we talk like we know what we’re talking about when we talk about love.”

“That’s all we have, finally, the words, and they had better be the right ones.”

What We Talk About When We Talk About Love

The text we use is actually Beginners, the unedited version. A drink is required in order to read it with the proper context. Probably several. No. Definitely several.

Billy Dee Shakespeare

“It works every time.”

These old works have pretty much no relevance today, and are mostly forgotten by everyone except humanities faculty. The analysis of them depicted in this document is pretty much definitive, and leaves little else to say regarding them, so don’t bother reading them if you haven’t already.

R

Up until even a couple of years ago, R was terrible at text. You really only had base R for basic processing and a couple of packages that were not straightforward to use. There was little for scraping the web. Nowadays, I would say it’s probably easier to deal with text in R than it is elsewhere, including Python. Packages like rvest, stringr/stringi, tidytext, and more make it almost easy enough to jump right in.
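As a rough illustration of how little code is now needed, here is a minimal sketch that tokenizes two of the quotes from the Texts section and counts words with tidytext. The object names (quotes, word_counts) are made up for this example, and it assumes the dplyr and tidytext packages are installed.

```r
library(dplyr)
library(tidytext)

# two quotes from the Texts section, one row per author (illustrative data)
quotes = tibble(
  author = c('Barthelme', 'Carver'),
  text   = c(
    'You may not be interested in absurdity, but absurdity is interested in you.',
    "That's all we have, finally, the words, and they had better be the right ones."
  )
)

# unnest_tokens lowercases the text and returns one word per row
word_counts = quotes %>%
  unnest_tokens(word, text) %>%
  count(author, word, sort = TRUE)

word_counts
```

From here the usual dplyr verbs apply, e.g. an anti_join with the stop_words data frame that ships with tidytext.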

One can peruse the Natural Language Processing task view to start getting a sense of what all is available in R.

The one drawback with R is that most text processing is slow and/or memory intensive. The Shakespeare corpus is only a few dozen works, none of them very long, and yet your basic LDA might still take a minute or so. Most text analysis situations might involve thousands to millions of texts, such that the corpus itself may be too much to hold in memory, and thus R, at least on a standard computing device or with the usual methods, might not be viable for your needs.
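To get a feel for the memory issue, the following sketch compares a dense and a sparse representation of a simulated document term matrix using the Matrix package. The dimensions and counts are invented purely for illustration and are not taken from the Shakespeare data.

```r
library(Matrix)

set.seed(123)

# made-up sizes: 1000 documents, 5000 terms, 50000 word occurrences
n_docs    = 1000
n_terms   = 5000
n_nonzero = 50000

# random (document, term) positions; repeated positions are summed into counts
i = sample(n_docs,  n_nonzero, replace = TRUE)
j = sample(n_terms, n_nonzero, replace = TRUE)

dtm_sparse = sparseMatrix(i = i, j = j, x = 1, dims = c(n_docs, n_terms))
dtm_dense  = as.matrix(dtm_sparse)

# the dense version stores every zero, the sparse one only the nonzero cells
format(object.size(dtm_dense),  units = 'MB')
format(object.size(dtm_sparse), units = 'MB')
```

Real document term matrices are typically far sparser than this toy one, so the gap tends to grow with the size of the vocabulary.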

Python

While R has done a lot to catch up, more advanced text analysis techniques are typically developed in Python (if not lower-level languages), and so the state of the art may be found there. Furthermore, much of text analysis is a high-volume affair, and in that case it will likely be done much more efficiently in the Python environment, though one still might need a high performance computing environment. Here are some of the popular modules in Python.

- nltk

- textblob (the tidytext for Python)

- gensim (topic modeling)

- spaCy

A Faster LDA

We noted in the Shakespeare start to finish example that there are faster alternatives to the standard LDA in topicmodels. In particular, the powerful text2vec package contains a faster and less memory intensive implementation of LDA, and of text processing generally, both of which are very important if you want to use R for text analysis. The other nice thing is that it works with LDAvis for visualization.

For the following, we’ll use one of the partially cleaned document term matrices for the Shakespeare texts. One thing to get used to is that text2vec uses the newer R6 class system for its objects, hence the $ approach you see for calling specific methods.
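As a brief aside for those who have not seen R6 before, here is a small toy class showing the same $new() and $method() pattern. WordCounter and its methods are hypothetical and written only for this illustration; they are not part of text2vec.

```r
library(R6)

# hypothetical toy class, only meant to show the R6 calling convention
WordCounter = R6Class(
  'WordCounter',
  public = list(
    words = character(0),

    # add the words of one text to the object; modifies the object in place
    add_text = function(text) {
      new_words  = strsplit(tolower(text), '[^a-z]+')[[1]]
      self$words = c(self$words, new_words[new_words != ''])
      invisible(self)  # returning self allows method chaining
    },

    # the n most frequent words seen so far
    top = function(n = 1) {
      head(sort(table(self$words), decreasing = TRUE), n)
    }
  )
)

counter = WordCounter$new()
counter$add_text('This muck heaves and palpitates.')
counter$add_text('We are mired in the most exquisite mysterious muck.')
counter$top(1)
```

Note that add_text changes counter itself rather than returning a modified copy, which is the main difference from the usual R objects.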

library(text2vec)

load('data/shakes_dtm_stemmed.RData')

# load('data/shakes_words_df.RData') # non-stemmed

# convert to the sparse matrix representation using Matrix package

shakes_dtm = as(shakes_dtm, 'CsparseMatrix')

# setup the model

lda_model = LDA$new(n_topics = 10, doc_topic_prior = 0.1, topic_word_prior = 0.01)

# fit the model

doc_topic_distr = lda_model$fit_transform(x = shakes_dtm,

n_iter = 1000,

convergence_tol = 0.0001,

n_check_convergence = 25,

progressbar = FALSE)

INFO [2018-03-06 19:16:15] iter 25 loglikelihood = -1746173.024

INFO [2018-03-06 19:16:16] iter 50 loglikelihood = -1683541.903

INFO [2018-03-06 19:16:17] iter 75 loglikelihood = -1660985.396

INFO [2018-03-06 19:16:17] iter 100 loglikelihood = -1648984.411

INFO [2018-03-06 19:16:18] iter 125 loglikelihood = -1641481.467

INFO [2018-03-06 19:16:19] iter 150 loglikelihood = -1638983.461

INFO [2018-03-06 19:16:20] iter 175 loglikelihood = -1636730.733

INFO [2018-03-06 19:16:20] iter 200 loglikelihood = -1636356.883

INFO [2018-03-06 19:16:21] iter 225 loglikelihood = -1636487.222

INFO [2018-03-06 19:16:21] early stopping at 225 iteration

[,1] [,2] [,3] [,4] [,5] [,6] [,7] [,8] [,9] [,10]

[1,] "prai" "hear" "ey" "love" "word" "natur" "night" "god" "friend" "death"

[2,] "honor" "madam" "sweet" "dai" "letter" "fortun" "fear" "dai" "hand" "grace"

[3,] "heaven" "bring" "fair" "true" "hous" "world" "ear" "england" "nobl" "soul"

[4,] "life" "sea" "heart" "wit" "prai" "power" "sleep" "crown" "word" "live"

[5,] "matter" "bear" "light" "fair" "sweet" "poor" "death" "war" "stand" "blood"

[6,] "honest" "seek" "desir" "live" "husband" "set" "dead" "arm" "rome" "life"

[7,] "fellow" "heard" "beauti" "youth" "woman" "nobl" "bid" "majesti" "honor" "dai"

[8,] "hear" "lose" "black" "heart" "reason" "truth" "bed" "fight" "leav" "hope"

[9,] "heart" "strang" "kiss" "marri" "hand" "leav" "mad" "sword" "deed" "heaven"

[10,] "friend" "sister" "sun" "night" "talk" "command" "hand" "heart" "tear" "die"

[1] 7

# top-words could be sorted by “relevance” which also takes into account

# frequency of word in the corpus (0 < lambda < 1)

lda_model$get_top_words(n = 10, topic_number = 1:10, lambda = 0.2)

[,1] [,2] [,3] [,4] [,5] [,6] [,7] [,8] [,9] [,10]

[1,] "honest" "madam" "ey" "love" "letter" "natur" "ear" "england" "rome" "bloodi"

[2,] "beseech" "sea" "cheek" "youth" "merri" "report" "sleep" "majesti" "deed" "royal"

[3,] "knave" "water" "black" "wit" "woo" "spirit" "beat" "field" "banish" "graciou"

[4,] "warrant" "sister" "wretch" "signior" "jest" "judgment" "night" "uncl" "countri" "high"

[5,] "glad" "women" "flower" "count" "finger" "worst" "air" "march" "citi" "subject"

[6,] "action" "hair" "sweet" "lover" "choos" "author" "soft" "lieg" "son" "sovereign"

[7,] "worship" "lose" "vow" "danc" "ring" "qualiti" "knock" "fight" "rise" "foe"

[8,] "matter" "entreat" "mortal" "song" "horn" "virgin" "poison" "battl" "kneel" "flourish"

[9,] "fellow" "seek" "wing" "paint" "bond" "wine" "shake" "harri" "fly" "king"

[10,] "walk" "passion" "short" "wed" "troth" "direct" "move" "crown" "wert" "tide"

Given that fitting most text models can be very time consuming, consider any approach that might give you more efficiency.
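To make the lambda argument seen above a bit more concrete, the sketch below computes relevance on a toy topic-word matrix in base R. It follows the relevance definition used by LDAvis, lambda * log p(w|t) + (1 - lambda) * log(p(w|t) / p(w)), which I am assuming is what the lambda argument refers to. The probabilities and the helper functions relevance and top_words are made up for illustration.

```r
# toy topic-word probabilities: rows are topics, columns are words (made-up values)
phi = rbind(
  topic_1 = c(love = 0.50, heart = 0.30, crown = 0.05, sword = 0.15),
  topic_2 = c(love = 0.40, heart = 0.10, crown = 0.30, sword = 0.20)
)

# assumed overall word probabilities, treating both topics as equally prevalent
p_word = colMeans(phi)

# relevance as defined for LDAvis
relevance = function(phi, p_word, lambda) {
  lift = sweep(phi, 2, p_word, '/')   # p(w | t) / p(w)
  lambda * log(phi) + (1 - lambda) * log(lift)
}

# top n words per topic, one column per topic
top_words = function(phi, p_word, lambda, n = 2) {
  rel = relevance(phi, p_word, lambda)
  apply(rel, 1, function(r) names(sort(r, decreasing = TRUE))[1:n])
}

top_words(phi, p_word, lambda = 1)    # ranks by p(w | t) alone
top_words(phi, p_word, lambda = 0.2)  # favors words distinctive to a topic
```

With lambda = 1, love tops both toy topics simply because it is common everywhere. With lambda = 0.2, the second topic is led by crown and sword instead, which is the same kind of shift seen between the two Shakespeare tables.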